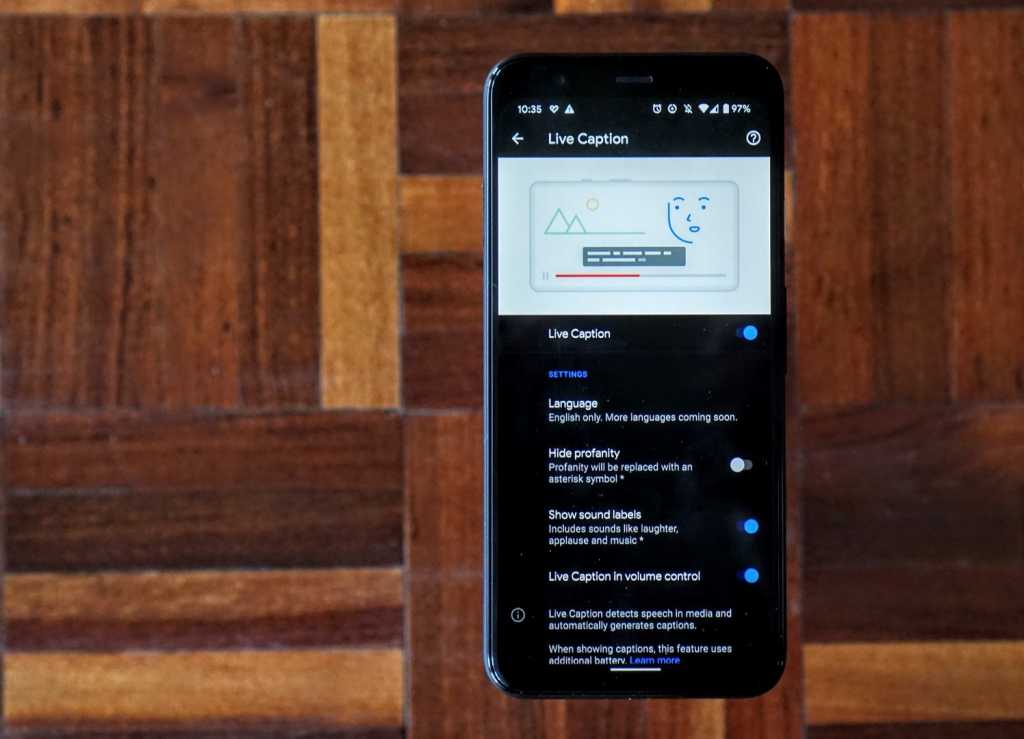

The Entertainment Technology Center at the University of Southern California is a think tank and research center that brings together senior executives, innovators, thought leaders, and catalysts from the entertainment, consumer electronics, technology, and services industries, along with the academic resources of the University of Southern California, to explore and act upon topics and issues related to the creation, distribution, and consumption of entertainment content. As an organization within the USC School of Cinematic Arts, ETC helps drive collaborative projects among its member companies and engages with next-generation consumers to understand the impact of emerging technology on all aspects of the entertainment industry, especially technology development and implementation, the creative process, business models, and future trends. ETC acts as a convener and accelerator for entertainment technology and commerce through research, publications, events, collaborative projects, and shared exploratory labs and demonstrations.

Live Caption will add captions to whatever you’re watching or listening to on your phone. Some newer Android versions come with Live Caption built in. There are two ways to activate Live Caption. You can turn it on before any audio starts playing, or you can wait until you need captions. To change Live Caption settings, open Settings on your device. Under Settings, you can find or change options such as turning Live Caption on or off.

The Live Transcribe speech engine “contains a text formatting library for visualizing ASR confidence, speaker ID, and more.” “Opus, AMR-WB, and FLAC encoding can be easily enabled and configured,” explains VB. For example: “To reduce latency even further than the Cloud Speech API already does, Live Transcribe uses a custom Opus encoder. The encoder increases bitrate just enough so that ‘latency is visually indistinguishable to sending uncompressed audio.’”
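The idea of “visualizing ASR confidence” can be made concrete with a short sketch. It does not use the Live Transcribe formatting library’s actual API; it assumes, hypothetically, that a recognizer returns `(word, confidence)` pairs with confidence between 0 and 1, and it marks words below an illustrative threshold so a reader can see which parts of a caption are uncertain.

```python
from typing import List, Tuple

# Hypothetical recognizer output: (word, confidence) with confidence in [0, 1].
Word = Tuple[str, float]


def format_caption(words: List[Word], threshold: float = 0.6) -> str:
    """Join words into one caption line, marking low-confidence words.

    Words whose confidence is below `threshold` are shown as "[word?]" so a
    reader can tell which parts of the caption the recognizer was unsure about.
    The 0.6 default is an arbitrary illustrative value.
    """
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be between 0 and 1")
    parts = []
    for text, confidence in words:
        parts.append(f"[{text}?]" if confidence < threshold else text)
    return " ".join(parts)


if __name__ == "__main__":
    hypothesis = [("turn", 0.95), ("on", 0.91), ("live", 0.88), ("caption", 0.42)]
    print(format_caption(hypothesis))  # turn on live [caption?]
```

A real caption UI would more likely use color or opacity than brackets; plain text markers just keep the sketch self-contained.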
Live Transcribe can transcribe speech in more than 70 languages and dialects into captions in real time, and it lets users type responses back on the screen. It is available on 1.8 billion Android devices. “Unlike Android’s upcoming Live Caption feature, Live Transcribe is a full-screen experience, uses your smartphone’s microphone (or an external microphone), and relies on the Google Cloud Speech API,” reports VentureBeat. (Live Caption will be exclusive to select Android Q devices.)

According to the Google Open Source Blog, “relying on the cloud introduces several complications, most notably robustness to ever-changing network connections, data costs, and latency.” The blog adds: “Today, we are sharing our transcription engine with the world so that developers everywhere can build applications with robust transcription.” The source code is available on GitHub.

Google evaluated audio codecs such as FLAC, AMR-WB, and Opus, each of which had different pros and cons depending on conditions. To cope with unreliable connections, the engine also “buffers audio locally and then sends it upon reconnection,” notes VB. Google’s speech engine closes and restarts to accommodate pauses and silence.
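The local buffering VB describes can be illustrated with a small sketch. This is not code from the Live Transcribe repository; the class `ReconnectingAudioSender`, its `transport` callback, and the `max_chunks` cap are hypothetical names chosen for illustration. It assumes audio arrives as byte chunks and that some other component (hypothetically, a network monitor) calls `on_disconnect()` and `on_reconnect()`.

```python
from collections import deque
from typing import Callable, Deque, Optional


class ReconnectingAudioSender:
    """Hypothetical sketch: forwards audio chunks while online, buffers them
    locally while offline, and flushes the backlog in order on reconnection."""

    def __init__(self, transport: Callable[[bytes], None],
                 max_chunks: Optional[int] = None) -> None:
        self._transport = transport  # assumed callback that uploads one chunk
        # With a maxlen, deque silently drops the OLDEST chunk once full.
        self._backlog: Deque[bytes] = deque(maxlen=max_chunks)
        self._connected = True

    @property
    def pending(self) -> int:
        """Number of chunks waiting to be sent."""
        return len(self._backlog)

    def send(self, chunk: bytes) -> None:
        if self._connected:
            self._transport(chunk)
        else:
            self._backlog.append(chunk)  # hold audio until the link returns

    def on_disconnect(self) -> None:
        self._connected = False

    def on_reconnect(self) -> None:
        # Flush oldest-first; only drop a chunk after the transport accepted it,
        # so an exception mid-flush leaves the unsent audio buffered.
        while self._backlog:
            self._transport(self._backlog[0])
            self._backlog.popleft()
        self._connected = True


if __name__ == "__main__":
    sent = []
    sender = ReconnectingAudioSender(sent.append, max_chunks=100)
    sender.send(b"chunk-1")          # online: sent immediately
    sender.on_disconnect()
    sender.send(b"chunk-2")          # offline: buffered
    sender.send(b"chunk-3")
    print(sender.pending)            # 2
    sender.on_reconnect()
    print(sent)                      # [b'chunk-1', b'chunk-2', b'chunk-3']
```

Capping the backlog with `deque(maxlen=...)` is one possible design choice: it bounds memory use by discarding the oldest audio if the device stays offline too long. Passing `max_chunks=None` keeps everything, at the cost of unbounded memory.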

Live Transcribe, launched in February, is a tool that uses machine learning algorithms to convert audio into captions. Google recently open-sourced the speech engine behind Live Transcribe, its Android speech-to-text transcription app designed for people who are deaf or hard of hearing, and posted the source code on GitHub. With this release, Google is looking to help developers create real-time captioning for long-form conversations in multiple languages.

In case you’re out of the loop, Google announced the Live Captions feature for Android 10 at Google I/O 2019. The feature transcribes the audio in videos, podcasts, social media content, and more to show you relevant subtitles. Live Captions are available system-wide, so you are not limited by the apps you use. Google initially introduced Live Captions with the Pixel 4 and later brought them to other Pixel devices through its ‘Pixel Feature Drop’.

Despite being touted as one of the major features of Android 10 on its official landing page, the feature is being rolled out in phases, and it is disappointing to see it reach third-party phones only now, when we are already looking forward to Android 11. Google, however, attributes the staggered rollout to memory and storage limitations. As Brian Kemler, Android accessibility product manager, told VentureBeat: “This requires a lot of memory and space to run. It’s only going to be on some select, higher-end devices. In the beginning, it will be limited, but we’ll roll it out over time.” Still, the software giant is finally expanding a useful accessibility feature that will help differently-abled people use smartphones better.
